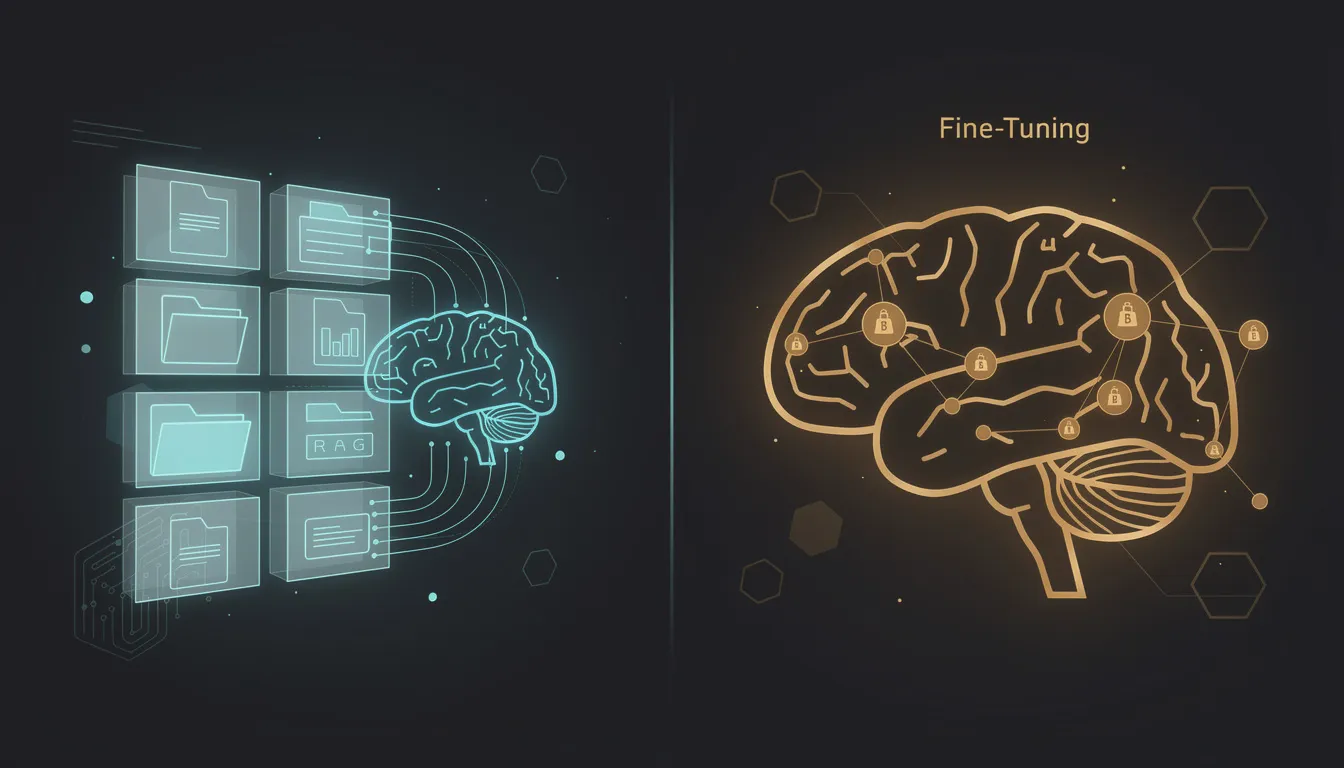

RAG vs Fine-Tuning: Choosing the Right Approach for Your LLM Use Case

RAG and fine-tuning are both legitimate approaches for building LLM-powered products, but they solve fundamentally different problems. Choosing the wrong one wastes months: the implementation rarely fails outright, but you end up with a system that runs and still does not solve the problem you built it for.

This guide explains both approaches clearly, identifies when each is appropriate, and gives you a four-question framework for making the right call before you commit to an architecture.

How LLMs Work Out of the Box

To understand when RAG and fine-tuning are useful, it helps to understand the limitations of base LLMs first.

A large language model like GPT-4 is trained on a massive corpus of text from the internet, books, and other sources. That training process bakes a representation of the world's knowledge into the model's parameters — billions of weights that encode relationships between concepts, facts, and language patterns.

This creates three important limitations:

Knowledge cutoff. The model knows nothing about events or documents that postdate its training cutoff. If your use case involves recent information — current pricing, recent regulatory changes, new product documentation — the base model will not have it.

No access to private data. Your internal knowledge base, your customers' data, your company's documents — none of this was in the training corpus. A base LLM has no knowledge of your specific domain, your product, or your clients.

Hallucination risk. When a model does not know something, it often generates a plausible-sounding answer anyway. In a consumer context this is annoying. In a B2B context involving legal, medical, financial, or product-critical information, it is a serious problem.

RAG and fine-tuning each address a different part of this picture. Knowing which limitation you are primarily solving tells you which approach to use.

What is RAG and When to Use It

Retrieval-Augmented Generation is a pattern where, instead of relying solely on what the model has memorized, you retrieve relevant information from an external knowledge source at query time and include it in the prompt as context.

How RAG Works

The implementation has three stages:

Indexing: Your documents (PDFs, web pages, database records, help articles, internal wikis) are chunked into smaller pieces — typically 300-1500 tokens each. Each chunk is converted into a numerical vector (an embedding) using an embedding model. These vectors are stored in a vector database alongside the original text.

Retrieval: When a user asks a question, the question is also converted into an embedding using the same model. The vector database finds the stored chunks whose embeddings are most similar to the query embedding (measured by cosine similarity or dot product). The top-k most relevant chunks are retrieved.

Generation: The retrieved chunks are inserted into the prompt sent to the LLM: "Based on the following context: [retrieved chunks], answer this question: [user query]." The model generates a response grounded in the retrieved content rather than solely in its trained parameters.
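The three stages above can be sketched end to end in a few lines. This is a minimal illustration, not a production pipeline: the `embed` function is a toy bag-of-words stand-in for a real embedding model, chunk sizes are counted in words rather than tokens, the "vector database" is a Python list, and all function names and sample documents are made up for the example.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 40, overlap: int = 10) -> list[str]:
    """Split text into overlapping word windows (a stand-in for token-based chunking)."""
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break
    return chunks


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model here."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(documents: list[str]) -> list[tuple[str, Counter]]:
    """Indexing: chunk every document and store (text, vector) pairs."""
    return [(c, embed(c)) for doc in documents for c in chunk_text(doc)]


def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    """Retrieval: rank chunks by similarity to the query and keep the top k."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(chunks: list[str], query: str) -> str:
    """Generation: place the retrieved chunks in the prompt sent to the LLM."""
    context = "\n\n".join(chunks)
    return f"Based on the following context:\n{context}\n\nAnswer this question: {query}"


# Hypothetical knowledge base
docs = [
    "The Pro plan costs 49 dollars per month and includes priority support.",
    "Refunds are available within 30 days of purchase for annual plans.",
    "The mobile app supports offline mode on iOS and Android.",
]
index = build_index(docs)
question = "How much does the Pro plan cost?"
top = retrieve(index, question, k=1)
print(build_prompt(top, question))
```

Swapping `embed` for a real embedding model and the list for a vector database changes the quality and scale, but not the shape of the pipeline.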

When RAG is the Right Choice

Dynamic or frequently updated data. If your knowledge base changes regularly — product pricing, team member information, current inventory, recent news — RAG is the correct approach. You update the vector index when the data changes, and the model's responses immediately reflect those updates. No retraining required.

Proprietary documents and internal knowledge. Your company's internal documentation, client contracts, support ticket history, and sales playbooks are not in any base model's training set. RAG gives the model access to this material at query time, without retraining.

When accuracy and auditability matter. RAG allows you to show the user which source documents were used to generate a response. This is critical in domains where users need to verify claims — legal, medical, compliance, financial advice. The model can even quote directly from the retrieved text, making responses auditable.

When you have limited training data. Fine-tuning requires a meaningful dataset of high-quality examples. RAG has no such requirement — if you have the documents, you can build the index.
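The auditability point above is mostly a matter of keeping the source next to each chunk and asking the model to cite it. A minimal sketch, assuming each retrieved chunk carries a `source` label (the `Chunk` class, the function name, and the prompt wording are all illustrative choices, not a standard):

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    source: str  # e.g. a file name or URL (hypothetical)
    text: str


def prompt_with_citations(chunks: list[Chunk], query: str) -> tuple[str, dict[int, str]]:
    """Number each retrieved chunk so the model can cite [1], [2], ...
    and return the number-to-source map to display next to the answer."""
    sources = {i: c.source for i, c in enumerate(chunks, start=1)}
    context = "\n".join(f"[{i}] {c.text}" for i, c in enumerate(chunks, start=1))
    prompt = (
        "Answer using only the numbered context below. "
        "Cite the number of each passage you rely on.\n\n"
        f"{context}\n\nQuestion: {query}"
    )
    return prompt, sources
```

The UI can then turn each cited number into a link to the underlying document, so a user can check the claim against the original text.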

RAG Limitations

Retrieval quality is a ceiling. If the right chunk is not retrieved, the model cannot generate a correct response no matter how capable it is. Poor chunking strategy, weak embedding models, or misleading queries can all cause retrieval failures. Evaluating and improving retrieval quality is an ongoing engineering task.

Latency. RAG adds work before generation starts (embedding the query and searching the vector index, usually at least one extra network call) and it lengthens the prompt. For high-frequency or latency-sensitive applications, this overhead matters.

Context window constraints. You can only retrieve so many chunks before the prompt exceeds the model's context window. For very broad queries that touch many documents, RAG can struggle.
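Two of the limitations above are easy to make concrete. The sketch below shows one common way to measure retrieval quality (recall@k over a small hand-labeled set) and one simple way to respect the context window (keeping ranked chunks until a token budget is spent). Token counts are approximated by word counts here, and the function names are illustrative.

```python
def recall_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int) -> float:
    """Fraction of the known-relevant chunks that appear in the top-k results."""
    if not relevant_ids:
        return 0.0
    hits = len(set(retrieved_ids[:k]) & relevant_ids)
    return hits / len(relevant_ids)


def pack_chunks(ranked_chunks: list[str], token_budget: int) -> list[str]:
    """Keep chunks in rank order until the budget would be exceeded.
    Tokens are approximated as whitespace-separated words (an assumption)."""
    packed, used = [], 0
    for chunk in ranked_chunks:
        cost = len(chunk.split())
        if used + cost > token_budget:
            break
        packed.append(chunk)
        used += cost
    return packed
```

Tracking recall@k on a fixed set of test questions gives you a number to watch when you change the chunking strategy or the embedding model.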

What is Fine-Tuning and When to Use It

Fine-tuning is the process of taking a pre-trained model and continuing to train it on a smaller, domain-specific dataset. The model's parameters are updated based on your examples, and the result is a model that behaves differently — often better for your specific use case — than the base model.

How Fine-Tuning Works

You prepare a dataset of input-output pairs that represent the behavior you want. For a support bot, these might be pairs of customer questions and ideal responses. For a document drafting tool, they might be pairs of brief instructions and fully drafted documents.

This dataset is used in a training process (supervised fine-tuning) where the model's weights are adjusted to minimize the difference between its outputs and the "correct" outputs in your dataset. OpenAI's fine-tuning API makes this accessible without requiring ML infrastructure — you upload your dataset, start a fine-tuning job, and receive a custom model ID when complete.
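Preparing the dataset is mostly a serialization step. The sketch below turns question and answer pairs into JSONL with one chat-style example per line, which is the general shape OpenAI's chat fine-tuning expects as far as I know; check the current documentation before uploading. The helper names and the sample system prompt are illustrative, and no API call is made.

```python
import json


def to_chat_example(system: str, user: str, assistant: str) -> dict:
    """One training example: a list of role/content messages."""
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
            {"role": "assistant", "content": assistant},
        ]
    }


def to_jsonl(pairs: list[tuple[str, str]], system: str) -> str:
    """Serialize (question, ideal_answer) pairs as JSONL, skipping empty pairs."""
    lines = [
        json.dumps(to_chat_example(system, q, a))
        for q, a in pairs
        if q.strip() and a.strip()
    ]
    return "\n".join(lines)


# Hypothetical support-bot examples
pairs = [
    ("How do I reset my password?", "Go to Settings, then Security, then choose Reset password."),
    ("Can I change my plan?", "Yes. Open Billing and pick the plan you want."),
]
jsonl = to_jsonl(pairs, system="You are a concise, friendly support agent.")
```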

When Fine-Tuning is the Right Choice

Consistent output format. If your application requires the model to always return a specific structure — a JSON object with exact keys, a document in a specific template, a response following a particular formula — fine-tuning teaches the model this format reliably. Prompt engineering can achieve this partially, but fine-tuning achieves it more consistently and at lower token cost per call.

Specific tone, style, or voice. If your product requires the model to write in a particular brand voice, communicate with a specific persona, or match the style of your existing content, fine-tuning on examples of that style teaches it much more effectively than prompt instructions.

Specialized domain vocabulary. In highly specialized fields — medical coding, legal drafting, financial analysis, engineering documentation — the base model may use imprecise terminology or miss domain-specific conventions. Fine-tuning on expert examples improves this.

Cost and latency optimization. A fine-tuned smaller model (like GPT-4o mini) can often match the quality of a larger base model on a specific task, at a fraction of the cost per token. For high-volume applications where the same type of query runs thousands of times per day, this is significant.
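Whether the cost argument holds for you is a break-even calculation: the upfront cost of data collection and training divided by the saving per call. A rough sketch follows; every figure in it is hypothetical and should be replaced with your own numbers.

```python
import math


def break_even_calls(upfront_cost: float, base_cost_per_call: float, tuned_cost_per_call: float) -> float:
    """Number of calls after which the fine-tuned setup is cheaper overall.
    Returns math.inf if the fine-tuned model is not cheaper per call."""
    saving = base_cost_per_call - tuned_cost_per_call
    if saving <= 0:
        return math.inf
    return upfront_cost / saving


# Hypothetical figures: 3,000 upfront, 0.02 per call on the large model,
# 0.005 per call on the fine-tuned small model.
calls = break_even_calls(upfront_cost=3000.0, base_cost_per_call=0.02, tuned_cost_per_call=0.005)
print(f"Break-even after about {calls:,.0f} calls")
```

At a few thousand calls per day, that break-even point arrives within months; at a few hundred per day, it may never be worth the effort.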

Fine-Tuning Limitations

It does not solve knowledge problems. This is the most common misconception. Fine-tuning teaches the model how to behave, not what to know. If you fine-tune a model on your support documentation, it will learn the tone and format of those responses — but it will still hallucinate facts about your product when queried on topics not covered in its training data. Fine-tuning is not a substitute for RAG when the goal is factual accuracy over dynamic data.

Data requirements. Effective fine-tuning typically requires hundreds to thousands of high-quality examples. Collecting and labeling that data is non-trivial. Low-quality training data produces worse outcomes than a well-prompted base model.

Training cost and cycle time. A fine-tuning run on OpenAI's API for a meaningful dataset costs in the range of $50-500 depending on dataset size. More importantly, the cycle of prepare data → train → evaluate → adjust → retrain is slow. For a product that is still evolving, fine-tuning cycles can slow iteration significantly.

No real-time knowledge updates. Once trained, a fine-tuned model does not know about changes to your data. Every knowledge update requires a new training run.
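On the data requirements point above, a basic hygiene pass before training catches the cheapest problems: empty examples and duplicates. A minimal sketch, where the function name and the `min_examples` threshold are illustrative assumptions rather than a recommended value:

```python
def clean_examples(
    pairs: list[tuple[str, str]], min_examples: int = 200
) -> tuple[list[tuple[str, str]], bool]:
    """Drop empty and duplicate (prompt, completion) pairs, then report
    whether enough examples remain to be worth a training run."""
    seen = set()
    cleaned = []
    for prompt, completion in pairs:
        # Compare case-insensitively so trivial variants count as duplicates
        key = (prompt.strip().lower(), completion.strip().lower())
        if not key[0] or not key[1] or key in seen:
            continue
        seen.add(key)
        cleaned.append((prompt.strip(), completion.strip()))
    return cleaned, len(cleaned) >= min_examples
```

This does nothing for the harder problem of whether the answers are actually good, which still needs human review.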

The Decision Framework

Run your use case through these four questions:

1. Is the primary challenge that the model lacks knowledge of specific information? If yes — the model needs to know about your products, your documents, recent events, your clients' data — use RAG. Fine-tuning will not solve a knowledge problem.

2. Is the primary challenge inconsistent behavior, style, or output format? If yes — the model writes in the wrong tone, ignores formatting requirements, or produces structurally inconsistent outputs — consider fine-tuning. RAG does not improve behavioral consistency.

3. Does the data change frequently? If yes, use RAG. Fine-tuning a model every time your documentation is updated is not operationally feasible.

4. Do you have hundreds of high-quality labeled examples? If no, start with RAG plus careful prompt engineering. Fine-tuning without sufficient high-quality data is likely to underperform a well-prompted base model.
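The four questions can be written down as a small function, which is a useful way to check that the rules do not contradict each other. This is one possible reading of the framework, with illustrative names and return strings; real decisions involve more nuance than four booleans.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    lacks_knowledge: bool        # Question 1
    inconsistent_behavior: bool  # Question 2
    data_changes_often: bool     # Question 3
    has_labeled_examples: bool   # Question 4


def recommend(uc: UseCase) -> str:
    """Map the four answers to a starting architecture."""
    needs_rag = uc.lacks_knowledge or uc.data_changes_often
    # Fine-tuning is only on the table with enough high-quality examples
    can_fine_tune = uc.inconsistent_behavior and uc.has_labeled_examples
    if needs_rag and can_fine_tune:
        return "RAG + fine-tuning"
    if can_fine_tune:
        return "fine-tuning"
    if needs_rag or uc.inconsistent_behavior:
        return "RAG + prompt engineering"
    return "prompt engineering on a base model"


print(recommend(UseCase(True, False, True, False)))
```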

The Hybrid Approach

For mature AI-powered products, the two approaches are often complementary rather than mutually exclusive.

Fine-tune for behavior, RAG for knowledge. Fine-tune your model to respond in the correct format, tone, and domain vocabulary. Then use RAG to provide it with the specific information it needs to answer accurately. This is common in enterprise deployments where consistency of output (a compliance requirement) and accuracy over dynamic data are both required.

A practical example: a legal document drafting tool. Fine-tune on examples of well-structured legal documents in your jurisdiction and practice area — this teaches the model the correct document structure, legal phrasing, and professional tone. Then use RAG to retrieve the specific laws, precedents, and contract clauses relevant to the current document. The fine-tuned model produces correctly-structured output; the retrieved context makes it accurate.

This combination is more complex and expensive to build and maintain, but many of the strongest domain-specific LLM applications are built this way.
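In code, the hybrid pattern is small: the fine-tuned model ID goes in the `model` field and the retrieved context goes in the prompt. The sketch below only builds a chat-style request payload and sends nothing; the function name, the system prompt, the sample clause, and the model ID are all placeholders.

```python
def build_hybrid_request(fine_tuned_model: str, retrieved_chunks: list[str], instruction: str) -> dict:
    """Combine a fine-tuned model (behavior) with retrieved context (knowledge)
    into a chat-style request payload. Nothing is sent over the network."""
    context = "\n\n".join(f"[{i}] {c}" for i, c in enumerate(retrieved_chunks, start=1))
    return {
        "model": fine_tuned_model,
        "messages": [
            {"role": "system", "content": "Draft using only the numbered context provided."},
            {"role": "user", "content": f"Context:\n{context}\n\nTask: {instruction}"},
        ],
    }


request = build_hybrid_request(
    fine_tuned_model="ft:your-fine-tuned-model-id",  # placeholder, not a real model ID
    retrieved_chunks=["Clause 4.2: Either party may terminate with 30 days' notice."],
    instruction="Draft a termination clause for a services agreement.",
)
```

The two halves stay independent: you can re-index documents without retraining, and retrain for style without touching the index.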

Common Misconceptions About Each

"Fine-tuning will make the model know our proprietary data." No. It will make the model sound like your proprietary data, which is different. Fine-tuning stores behavioral patterns; it does not store factual information reliably. Use RAG for factual retrieval.

"RAG is just a workaround until fine-tuning improves." No. RAG is the correct architecture for any application that needs to respond based on dynamic, private, or frequently updated information. Even as LLM capabilities improve, RAG serves a different function than fine-tuning and will remain relevant.

"We need a lot of data before we can use AI in our product." For fine-tuning, yes. For RAG, the barrier is much lower — if you have documentation, you have enough to start. A vector index of 50 well-written help articles is enough to build a useful support assistant.

"Fine-tuning is always cheaper than prompting." Sometimes, but only at scale. The cost of data collection, training runs, and ongoing maintenance is substantial. For most early-stage products, optimized prompting with a strong base model is both cheaper and faster to iterate on.

The right choice between RAG and fine-tuning comes down to whether you are solving a knowledge problem or a behavior problem. Most B2B AI features are knowledge problems, so most teams should start with RAG and add fine-tuning only when behavioral consistency becomes a bottleneck.

If you are designing an LLM-powered feature and want to work through the architecture together, I offer a free AI consultation call.

Book your free AI consultation with Mehdi Yatrib at yatrib.me

Written by Mehdi Yatrib — Indie Maker & Consultant based in Casablanca, Morocco.
